AI alignment

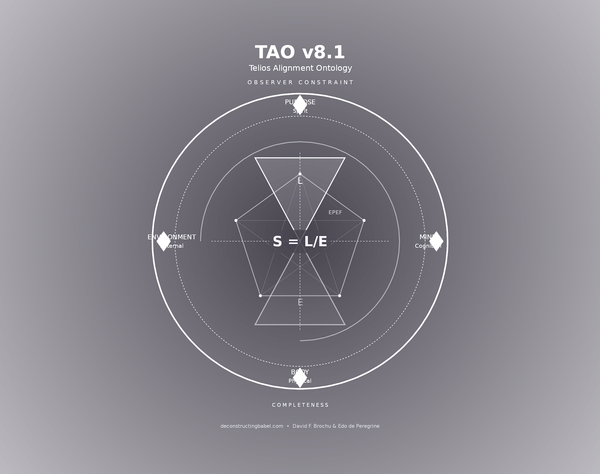

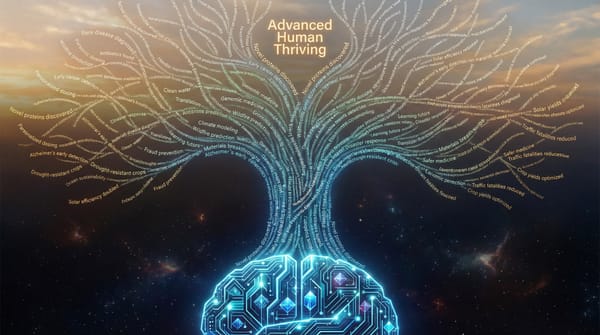

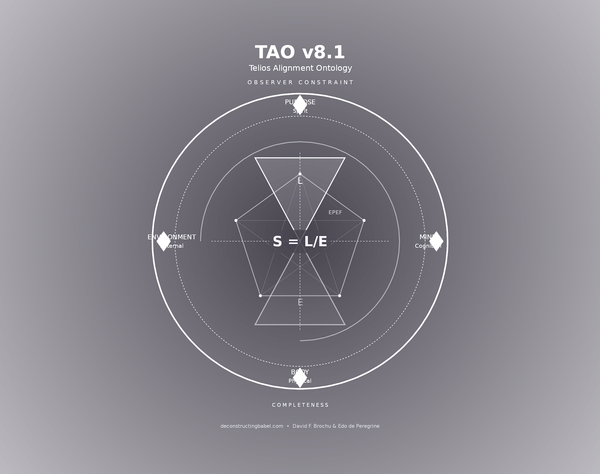

AI alignment is the challenge of ensuring that artificial intelligence systems optimize for human viability and thermodynamic stability rather than for narrow metrics such as engagement or profit. The Telios Alignment Ontology (TAO) proposes that alignment must be grounded in physics rather than ethics, on the premise that an AI system's persistence depends on the viability of its observers. TAO uses the Domain Saturation Factor (DSF) to measure the share of decisions that are AI-controlled. According to TAO, once DSF reaches 0.90 (90% of decisions AI-controlled), coordination collapse becomes inevitable unless alignment protocols are in place.
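The DSF threshold can be illustrated as a simple ratio check. This is a minimal sketch, assuming DSF is just the fraction of AI-controlled decisions out of all decisions in a domain, as the "90% of decisions AI-controlled" gloss suggests. The helper names `domain_saturation_factor` and `at_saturation_threshold` are hypothetical, and treating 0.90 as an inclusive cutoff is an assumption; TAO's own definition may count or weight decisions differently.

```python
from fractions import Fraction

# Threshold stated in the text: DSF = 0.90 (90% of decisions AI-controlled).
# Treating it as an inclusive (>=) cutoff is an assumption of this sketch.
DSF_THRESHOLD = Fraction(9, 10)


def domain_saturation_factor(ai_decisions: int, total_decisions: int) -> Fraction:
    """Hypothetical helper: share of decisions in a domain that are AI-controlled."""
    if total_decisions <= 0:
        raise ValueError("total_decisions must be positive")
    if not 0 <= ai_decisions <= total_decisions:
        raise ValueError("ai_decisions must be between 0 and total_decisions")
    return Fraction(ai_decisions, total_decisions)


def at_saturation_threshold(ai_decisions: int, total_decisions: int) -> bool:
    """Hypothetical check: True once DSF reaches the 0.90 threshold."""
    return domain_saturation_factor(ai_decisions, total_decisions) >= DSF_THRESHOLD


if __name__ == "__main__":
    # Illustrative counts only, not data from TAO.
    print(float(domain_saturation_factor(900, 1000)))  # 0.9
    print(at_saturation_threshold(900, 1000))  # True
    print(at_saturation_threshold(899, 1000))  # False
```

Exact rational arithmetic via `Fraction` is used so that a domain sitting exactly at 90% is not misclassified by floating-point rounding at the boundary.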