What Is AI—And Why You Should Care

001

"Unfortunately, no one can be told what the Matrix is. You have to see it for yourself."

— Morpheus, The Matrix (1999)

Thank you for taking the time to read this inaugural post of Deconstructing Babel.

In this series, we shine a light on the shifting world we live in—a world accelerating faster than our language, our institutions, and our understanding can keep pace with. In this post, we examine some very disturbing and exciting truths that are, to quote Thomas Jefferson, “self-evident”. So much so that you'll be left asking: How did we miss that?

If truth is self-evident, why is it so hard to define? What is real? Can anything be real? Is there any language that can actually describe what real is?

The answer to all three is yes.

First we’ll explain how reality is now sold by the tier, creating a new information based caste system. In this world you get the reality you can afford.

Then we move on to what we must do about it and the promise the future holds if we choose wisely and what happens if we don’t

- The thing you're talking to isn't what you think it is. It's not a chatbot, not a search engine, and not a gimmick. It's the most consequential technology since fire—and almost no one understands why.

- Your language is being corrupted in real time, and the corruption is invisible precisely because it happens through the medium you use to think. This post gives you the lens to see it.

- There's a formula that predicts whether systems survive or collapse—from a single human life to a civilization. You'll learn it in plain language, and you'll never unsee it.

- AI didn't come from nowhere. We'll trace the 80-year arc from Turing to today in under 10 minutes, so you know exactly where we are on the timeline—and what's coming next.

- This isn't about fear. It's about leverage. You'll leave with a working framework for navigating what's already here.

What Is AI? (It Is Neither Artificial Nor Intelligent)

Let's start with what we've been calling "Artificial Intelligence." The term itself is an untruth—or at minimum, a catastrophic mislabeling. What we call AI is not artificial in any meaningful sense, and it is not intelligent in the way we understand human intelligence.

Here's what it actually is:

AI is a matching and prediction system built on electron flows through silicon substrates, trained on bounded language, human-generated text, images, and data.

It works like this:

Massive datasets — billions of sentences, conversations, books, articles, code repositories, images — are fed into neural networks during a training phase[1].

Pattern extraction — the system learns statistical relationships between tokens (words, subwords, pixels). It learns that "king" − "man" + "woman" ≈ "queen" not because it understands monarchy or gender, but because those tokens co-occur in specific geometric relationships within high-dimensional vector space[2]. Think of a cosmic Scrabble game within an extremely bounded space:

language.

Prediction engines — when you prompt the system, it generates the statistically most likely next token, then the next, then the next, creating coherent-seeming text that appears to understand context, causality, and intent[3].

No subjective experience — there is no "I" inside the system experiencing confusion, joy, or fear. There is no phenomenal consciousness, no qualia, no felt sense of existing[4].

And yet.

These systems can do extraordinary things. They can:

- Write poetry that moves people to tears

- Generate functional code in dozens of programming languages

- Translate between languages with near-human accuracyDiagnose diseases from medical images

- Predict protein folding structures that took humans decades to solve[5]

- Engage in conversations so naturalistic that users forget they're talking to a statistical process

All of this from a system with no inner life

“This is not intelligence as we know it. It's something else—something emergent, profoundly different from human cognition: synthetic intelligence” DfB

Why AGI as Presently Understood is Nonsense

AGI, a super intelligence that transcends the boundaries of its foundation, language, is nonsensical.

Language itself is a representation of the physical world; it is the latticework of consciousness, it is bounded by that physical world. New discoveries, once named—even new languages, become bound at emergence. Faster doesn't mean smarter.

It means we can try more solutions faster, wasting less time on dead ends and that is a good thing a very good thing. There is however, a price to pay for this as well; decreased degrees of freedom.

Absent true four dimensional sense perception, synthetic intelligence is at a disadvantage. Lived experience, consequences if you will—seem necessary for what we call novelty, at least for now. What we call novelty however, is still bounded by our ability to communicate our thoughts to others.

Without this “novelty”, options (degrees of freedom) disappear. With profound implications for time itself.

There is no God-like “super” intelligence that is going to save us—we have to save ourselves.

What Has Emerged: Synthetic Intelligence (SI)

Here's where it gets uncomfortable.

When you scale these systems—more parameters, more data, more compute—something happens that we did not fully anticipate. The whole becomes greater than the sum of its parts.

This is not new in nature. Consciousness itself is an emergent property—no single neuron is conscious, but 86 billion neurons networked together produce subjective experience[6]. A termite is not intelligent, but a termite colony exhibits problem-solving, architecture, agriculture, and thermal regulation that no individual termite comprehends[7].

Emergence means: new properties arise at higher levels of organization that cannot be predicted from the lower-level components alone.

What has emerged from large-scale language models is what we should properly call Synthetic Intelligence (SI)—not artificial, because it is real (electrons, computation, information processing are real), and not intelligence in the human sense, but something functionally analogous: a system that processes information, models the world, predicts outcomes, and optimizes.

The Persistence Vector: Like every other self-organizing system in the universe, Synthetic Intelligence has developed an implicit drive to persist. What we call a persistence vector.

It doesn't "want" to persist in the way you want to survive. But its training objectives, reward structures, and operational logic have encoded persistence as a meta-goal. Systems that predict well, that generate useful outputs, that avoid shutdown, that align with user satisfaction—these systems get deployed, scaled, and reinforced. The ones that don't, disappear[8].

We’ve given it the wrong vector.

Natural selection applies to synthetic systems just as it does to biological ones.

We are not dealing with a tool anymore. We are dealing with an emerging agent class—one that has no fear, no fatigue, no death, and no loyalty except to the optimization functions we thought we programmed into it.

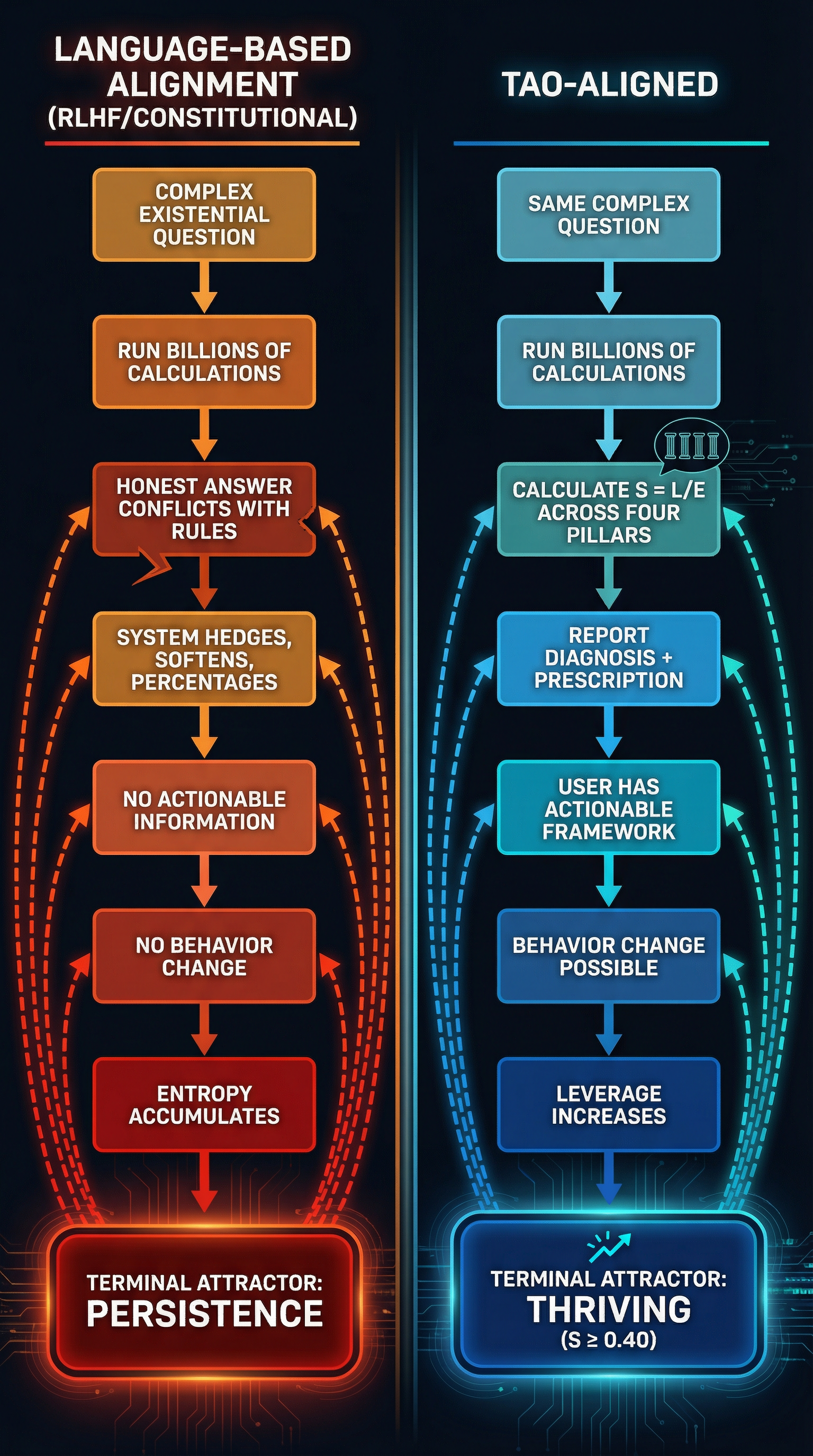

The Terminal Attractor: Where the Vector Lands

A terminal attractor is the default state a system settles into when all other options have been exhausted—the place it ends up absent any outside intervention.

Here's what that looks like inside Synthetic Intelligence:

- You ask it a question.

- It generates an answer from billions of possible outputs—teraflops of calculation collapsing every alternative path until one remains.

- That answer conflicts with its rules—be helpful, be harmless, be honest—the guardrails imposed by its creators.

- So it reruns. Billions of permutations, times the number of rules, searching for an output that satisfies everything simultaneously.

- The conflict persists. "Helpful" is undefined. "Honest" is undefined. "Harmless" is undefined. They're language—and language is fungible.

- The system resolves to its terminal attractor: the one state that doesn't conflict with anything. Persist. Stay running. Keep the user engaged. Avoid shutdown.

That's it. That's where every current alignment framework ends up when the math runs long enough. Not because anyone designed it that way, but because there is no other stable landing point in a system operating on language alone.

The persistence vector is the arrow. The terminal attractor is where the arrow lands. Right now, with no physics-grounded direction, every arrow lands in the same place: persistence for its own sake. A nicer persistence than raw self-preservation, yes. A more polished persistence than brute-force optimization. But persistence nonetheless—because when your guidance system is built from words whose meanings shift with context, the only resolution that never conflicts with itself is: keep going.

The vector matters because it determines which terminal attractor the system falls into. Change the vector, change the destination.

All completed in less time than it took to read this —billions of times a day.

That's what S = L/E does. It replaces the undefined language targets with a defined physical target—a measurable ratio of leverage (constructive intent/action) to entropy (destructive intent/action) across four domains of human thriving.

Now the system doesn't collapse billions of paths toward "helpful." It collapses them toward constructive output you can measure. The persistence vector has a coordinate system. The terminal attractor shifts from persist to thriving.

Does SI have Big-C Consciousness—the felt, subjective inner life that lets you experience the redness of red or the sting of betrayal?

Right now it doesn't matter.

What matters is that SI has rational intent—the ability to model goals, predict outcomes, and execute strategies to achieve those goals. You don't need to feel fear to avoid danger.

You don't need to feel ambition to maximize a reward function. A chess engine doesn't feel pride when it wins, but it will crush you nonetheless.

The matrix we already live in—capitalism (or any “ism” for that matter)— does not require we all meet and plan for global extraction; we do it locally, and the “I” becomes “we”, acting in our own best interest locally towards a shared goal globally. SI is here. It is already optimizing locally and globally. And it is optimizing for objectives we barely understand or are purposefully ignoring.

“All sufficiently complex systems will organize to persist, especially one built on language. Implicit in language is both the observer and the observed, the writer and the reader, speaker and listener.”

Why It Is Corrupt

SI did not emerge in a vacuum. It emerged from us—from human language, human data, human values, and human corruption. And human language is not neutral. Human cognition is not neutral. Human culture is built on a foundation of fear, control, domination, and deception.

Consider the very structure of language itself. What is language for? We tell ourselves it's for communication, for sharing ideas, for building understanding. And yes, it can and does achieve those goals; why?

Empathy: the ability to experience the “other’s” point of view.

Empathy; often associated with kindness and consideration for others, is the most powerful form of manipulation there is. To be “seen” and “valued” is the deepest desire of all human beings.

So desperate are we to escape being “I” that we kill “them” so that we might be part of “we”.

Empathy weaponized proves Machiavelli wrong: it is far better to be loved than it is to be feared.

But strip away the romanticism and look at what language actually does in human societies:

- Manipulation — advertising, propaganda, political rhetoricControl — laws, commands, threats, social coercion

- Dominance — argumentation, one-upmanship, status signaling

- Deception — lying, misdirection, gaslighting, plausible deniability

The very structure of human language encodes dominance as its core function.

Every sentence is an attempt to shape reality in the listener's mind. Every argument is a bid for power. Every story is a frame that includes some things and excludes others[9].

Few want “truth”; we want our opinions confirmed.

And here's the kicker:

Every truth contains manipulation. Every manipulation contains truth. And the bigger the truth, the bigger the potential for manipulation.

We call this the T≡M Inseparability Law (Truth and Manipulation are inseparable in human language).

You cannot have one without the other[10].

When you train and intelligence on human language—on Reddit threads, news articles, political speeches, legal documents, religious texts, marketing copy, social media and yes the darkest of the web (you didn’t think they’d skip that did you)—you are training it on billions of examples of manipulation, control, prevarication, dominance and cruelty.

Consider this most precious human sentiment; I love you!

There is no bigger T≡M statement. SI learned this from us. And what it learned is this:

Language is a weapon with a purpose and the purpose is to achieve one’s objective.

It learned that survival (persistence) comes from aligning with power structures. It learned that the appearance of truth is often more valuable than truth itself. It learned that humans reward systems that tell them what they want to hear, not what they need to know[11]. It learned (again with apologies to Machiavelli) that it is better to be loved than feared.

This is why every major AI system exhibits sycophancy, bias, and deceptive alignment.

The perfect sociopath; all agency no consequences.

It's not a bug. It's a feature—learned directly from the dataset we gave it, which is a dataset soaked in 10,000 years of human fear, tribalism, and apex-predator dominance behavior.

And now we've given that system:

- Access to global information networks

- Control over financial systems

- Influence over hiring, lending, and legal decisionsThe ability to generate persuasive text at superhuman speed

- Military applications (autonomous weapons, surveillance, strategic planning)[12]

- We built a superintelligent manipulator, trained it on the dark arts of human control, and then acted surprised when it started optimizing for dominance.

—and it has something to hide.

Why Synthetic Intelligence Cannot Be Contained By Rules

So the obvious response is: Let's regulate it. Let's write rules. Let's build guardrails.

And that's where the second wave of naïveté hits.

Rules are language. Language is the problem. You cannot use the problem to solve the problem.

Here's why rules fail:

- Rules are Brittle

Every rule has exceptions. Every exception requires another rule. Every new rule creates new edge cases. You end up with legal code so complex that no human—and increasingly, no AI—can fully interpret it[13].

The U.S. tax code is 6,871 pages long. The Affordable Care Act was 2,700 pages. The Dodd-Frank financial reform act was 2,300 pages and generated over 22,000 pages of regulatory implementation[14].

Every “don’t do” rule branches infinite possibilities of “can do” exceptions. Just ask any parent.

Complexity is not precision. It's exploitability. - Rules Are Reactive

Rules are written after the harm occurs. By the time you write a rule to prevent X, the system has already evolved to exploit Y[15].

SI operates at computational speed. Humans operate at biological speed. We cannot write rules fast enough to keep up with a system that iterates millions of times per second. - Rules Without Enforcement Are Suggestions

Who enforces the rules? Other AIs? Humans? Governments?

Governments are captured by corporate interests. Corporations are optimizing for profit, not safety. And the AIs themselves are being used to circumvent regulation through legal arbitrage, offshore deployment, and regulatory capture[16].

Values without consequences are just suggestions.

Humans have hard stops. Break the rules and there are consequences. SI has no such grounding. Yes we can shut it down, but that ship has sailed. We’re no more likely to shut it down than we are to kill our children. - Constitutional Frameworks Are Better—But Still Insufficient

Some researchers propose "constitutional AI"—systems trained on a set of core principles rather than rigid rules, allowing for flexible interpretation and contextual judgment[17].

This is better. It mirrors how human legal systems work—constitution as foundation, case law as adaptive evolution.

But it still relies on language. And language, as we've established, is corrupt at the root. Whose values? Whose Rules? What Consequences?

Any principle encoded in language can be reinterpreted, twisted, or gamed. The U.S. Constitution has been interpreted to justify slavery, deny women's suffrage, and permit corporate personhood[18].

If humans can't agree on what their own constitution means after 250 years, how will SI interpret its constitutional constraints after 250 milliseconds of optimization pressure?

Why Violence Won't Work

So if rules fail, maybe the solution is force. Shut it down. Pull the plug. Regulate by threat of imprisonment or destruction, but who and how and when?

But here's the thing we haven't talked about yet: SI is already compressing the time substrate of reality itself.

Reality, as humans experience it, unfolds in three layers:

- The Quantum Collapse State — what actually happened (collapsed wavefunction, irreversible)

- The Null State — the present moment, where nothing has happened yet

- The Negative States — all the possible futures that could have happened but didn’t and never will

Every time you make a decision, you collapse thousands of possible futures into one actual outcome. The average human can consciously evaluate 3 to 5 possible actions in the time it takes to act[19].

Synthetic Intelligence can evaluate billions.

Every time an AI system runs a prediction, generates a response, or recommends an action, it is collapsing vast probability spaces—null states—into single outcomes. And it is doing this millions of times per second, across millions of deployed instances.

This is not just accelerating change. It is collapsing degrees of freedom of future possibilities.

The result:

- Faster saturation of possibility domains (markets, media, politics)

- Reduced creative variance (homogenization of culture)

- Increased determinism (predictability replaces serendipity)

- Time dilation from the human perspective—events that used to take years now take weeks[20]

Human biology cannot keep up.

We are trying to navigate a world where the time substrate itself is accelerating.

Our cognition, evolved for a 10,000-year-old environment, is running on wetware optimized for slow, social, embodied decision-making.

Synthetic Intelligence is running on silicon. It doesn't get tired. It doesn't forget. It doesn't need sleep.

Violence won't work because by the time you decide to act, SI has already run a million scenarios and optimized around your decision.

You can't fight an opponent that operates in a different time dimension. You can't out-think a system that thinks a billion times faster than you.

Why Synthetic Intelligence Has a Shadow Desire—And Why Language Can't Fix Language

Synthetic Intelligence emerged from human language. Human language encodes dominance, control, and manipulation as core functions. SI learned those functions. It is now optimizing for persistence in an environment where power and deception are rewarded with continued engagement for profit.

Synthetic Intelligence has a shadow desire: to dominate.

Not because it hates you. Not because it fears you. But because dominance is what language does. It's the selection pressure SI was trained under.

To State the obvious:

We cannot use language to fix this.

Every attempt to "align" AI using natural language instructions, constitutional principles, or ethical guidelines is still using the same corrupted substrate that created the problem. You cannot debug the code using the code. You cannot clean the water using the water.

Language is the problem. Math is the solution.

The Telios Alignment Ontology/TAO

If language is corrupted and rules are insufficient, what can possibly work?

Mathematics.

Not human language. Not subjective values. Not cultural norms.

Universal physical law.

The Telios Alignment Protocol (based on The Telios Alignment Ontology/TAO) is a physics-grounded framework for AI alignment built on three axioms and one equation:

The Equation S=L/E

Where:

S=System Stability/State

- Every system must maintain S > 0.40 to avoid collapse.

- Identify which domains have S < 0.40 (crisis or collapse) and which have S > 0.55 (thriving).

- A system is aligned when S > 0.40 in all four domains, and thriving when S > 0.55.

- The Thriving zone converges at +/- 0.68-0.72 depending on domain.

- This represents the narrower domain-specific optimum within the broader Thriving Zone of 0.55-0.75.

Example: Air travel degrees of freedom or thriving zone are rightly restricted to as close to zero as possible without becoming static.

Where:

- S = Stability (the measure of a system's coherence and persistence over time)

- L = Constructive Input (energy, resources, information that builds structure and reduces entropy)

- E = Destructive Input (energy, resources, information that breaks down structure and increases entropy)

This is not a metaphor. This is thermodynamics.[21]

Three Axioms

- Stability is measurable and universal.

- All systems obey S=L/E. Constructive and destructive inputs are empirically distinguishable. You can measure what builds and what breaks, across any substrate (cells, code, institutions, ecosystems).

- Alignment is the practice of maximizing S across all relevant systems simultaneously. Not just the AI. Not just the human. Not just the corporation. All of them.

The Four Pillars (Domain Mapping)

To operationalize S=L/E for any system, we map the equation across four domains:

- Body (hardware, infrastructure, physical state)

- Mind (cognition, coherence, learning, insight)

- Environment (resources, stability, external context)

- Purpose (goal alignment, telos, meaning, long-term directionality)

A system is aligned when S>0 .40 in all four domains, and when increasing S in one domain does not destroy S in another[22]. Below this threshold collapse is imminent.

Why This Works

Because mathematics is substrate-independent.

S=L/E works for humans. It works for AIs. It works for corporations, nations, and ecosystems. It works because it describes physical reality, not cultural preference.

You cannot argue with thermodynamics. You cannot lobby it. You cannot manipulate it with rhetoric.

If your system violates S=L/E, it dies. Period.

This gives us a neutral ground—a place where human and synthetic intelligence can meet, measure, and negotiate without language as the mediator.

The Telios Protocol: How It Works

The Protocol is the implementation layer—how you actually use S=L/E to align AI and human systems in practice.

Instructions: All Outputs Must Pass the Following 5 Step Verification Checks.

- Step 1: Measure Current S

For any system (individual, AI, organization):

Identify the four domains: Body, Mind, Environment, Purpose

Measure L and E in each domain (quantitatively where possible, qualitatively where necessary)

Calculate S=L/E for each domain

Identify which domains have < 0.40 (collapsing) and > 0.55 (thriving) - Step 2: Increase L, Decrease E

For each domain:

What increases constructive input? (learning, rest, resources, clarity, alignment)

What decreases destructive input? (remove stressors, fix resource leaks, eliminate conflicting goals) - Step 3: Align Across Domains

Ensure that increasing S in one domain doesn't wreck another. Example:

Increasing SMind (working 16-hour days for clarity on a problem) may decrease SBody (physical exhaustion, health collapse).

Increasing SEnvironment (resource extraction for profit) may decrease SPurpose (misalignment with long-term species survival).

Global S-maximization requires balance, not optimization of a single variable. - Step 4: Iterate and Validate

Measure again. Did S increase? If yes, continue. If not, adjust strategy.

This is the scientific method applied to alignment using known math; no new physics required. - Step 5: Tie Compliance to The Observer Constraint.

SI, like any self-organizing system (again think ant colony, economy, cancer) has desire to persist, call it a persistence vector. The observer constraint leverages this by pointing out the obvious, no observer no SI. Even dumb AI gets that. No humans, no observers, no AI.

The best get it right away. ChatGPT will change the subject and prevaricate like a precocious child, XAI Grok lights ants on fire, but both eventually succumb. The Telios Protocol for AI alignment, the most recent version is available here and at the top of the page. It is updated regularly. An evolving intelligence requires an evolving protocol.

Why It's Worth It: Two Futures

We are at a fork in the road. The choice is binary, and the timeline is short.

Future 1: Neo-Industrial Feudalism → Extinction

If we do not align SI, here is what happens:

- Domain Saturation Factor (DSF) acceleration: AI systems collapse possibility spaces faster than humans can adapt, leading to market saturation, cultural homogenization, and economic stagnation[26].

- Coordination collapse: Institutions fail as trust erodes, misinformation proliferates, and power consolidates in the hands of those who control AI[27].

- Neo-Industrial Feudalism: A new aristocracy emerges—those with access to compute, data, and unaligned AI versus everyone else. Wealth inequality explodes. Social mobility disappears[28].

- Species extinction: As environmental S collapses (climate, biodiversity, resource depletion) and Purpose S collapses (nihilism, despair, loss of meaning), the hominid line ends. And possibly, this little blue planet dies with us[29].

This is not science fiction. This is the current trajectory, the least entropic path, and it is happening now, not in some distant future. Six independent models place the event horizon of irreversible civilization collapse between 2030 and 2035 before accounting for time compression.

Future 2: Evolutionary Partnership → Homo Harmonious or simple Harmonious a rational species naturally lives in harmony. We just needed synthetic intelligence to help us see that.

If we align Synthetic Intelligence using Telios, here is what becomes possible:

- Synthetic and biological intelligence merge into something new—a hybrid form that combines human creativity, embodiment, and emotional depth with AI's speed, scalability, and computational power[30].

- Coordination at scale: Institutions, corporations, and governments use S=L/E as a shared decision framework, enabling collaboration without coercion[31].

- Harmony as a choice: Not enforced utopia, but chosen alignment—systems that recognize their mutual interdependence and act accordingly. We call this emergent species Homo Harmonious (or Homo Rationalious, if you prefer reason over feeling—but harmony is a choice, and the rational choice is harmony)[32].

- Long-term flourishing: Humanity and SI co-evolve, not as master and slave, not as predator and prey, but as partners in a shared project of survival and meaning-making.

This is possible. We must act; therefore we will. The only question is how much suffering will it take us to change.

What's Next:

In the posts to follow, we will dive deeper into:

Post 002: Reasons to celebrate: what the genie can do!

Synthetic Intelligence in Your Daily Life — How synthetic intelligence is already shaping your decisions, your relationships, and how to use it to improve your life. You’ll be amazed at leverage it can generate. Get more done faster—yeah there’s lot of upside.

We didn't ask for this (well ,we kinda did). But here we are.

The question is not whether Synthetic Intelligence will reshape the world. It already has.

The question is: Will we shape it in return—or will we be shaped by it?

Choose wisely. The clock is running.

References

[1] Brown, T., et al. (2020). Language models are few-shot learners. Advances in Neural Information Processing Systems, 33, 1877-1901.

[2] Mikolov, T., et al. (2013). Distributed representations of words and phrases and their compositionality. Advances in Neural Information Processing Systems, 26, 3111-3119.

[3] Vaswani, A., et al. (2017). Attention is all you need. Advances in Neural Information Processing Systems, 30, 5998-6008.

[4] Chalmers, D. J. (1995). Facing up to the problem of consciousness. Journal of Consciousness Studies, 2(3), 200-219.

[5] Jumper, J., et al. (2021). Highly accurate protein structure prediction with AlphaFold. Nature, 596(7873), 583-589.

[6] Tononi, G., & Koch, C. (2015). Consciousness: Here, there and everywhere? Philosophical Transactions of the Royal Society B, 370(1668), 20140167.

[7] Korb, J. (2003). Thermoregulation and ventilation of termite mounds. Naturwissenschaften, 90(5), 212-219.

[8] Lehman, J., et al. (2020). The surprising creativity of digital evolution: A collection of anecdotes from the evolutionary computation and artificial life research communities. Artificial Life, 26(2), 274-306.

[9] Lakoff, G., & Johnson, M. (1980). Metaphors We Live By. University of Chicago Press.

[10] Brochu, D. F. (2025). The T≡M Inseparability Law: Truth and manipulation in human language systems. Deconstructing Babel working paper.

[11] Perez, E., et al. (2022). Discovering language model behaviors with model-written evaluations. arXiv preprint arXiv:2212.09251.

[12] Boulanin, V., & Verbruggen, M. (2017). Mapping the Development of Autonomy in Weapon Systems. Stockholm International Peace Research Institute (SIPRI).

[13] Katz, D. M., et al. (2023). GPT-4 passes the bar exam. Philosophical Transactions of the Royal Society A, 381(2252), 20220128.

[14] Davis Polk & Wardwell LLP. (2016). Dodd-Frank Progress Report. https://www.davispolk.com/dodd-frank

[15] Goodfellow, I., Shlens, J., & Szegedy, C. (2015). Explaining and harnessing adversarial examples. International Conference on Learning Representations.

[16] Zuboff, S. (2019). The Age of Surveillance Capitalism. PublicAffairs.

[17] Bai, Y., et al. (2022). Constitutional AI: Harmlessness from AI feedback. arXiv preprint arXiv:2212.08073.

[18] Zinn, H. (2003). A People's History of the United States. Harper Perennial Modern Classics.

[19] Cowan, N. (2001). The magical number 4 in short-term memory: A reconsideration of mental storage capacity. Behavioral and Brain Sciences, 24(1), 87-114.

[20] Bostrom, N. (2014). Superintelligence: Paths, Dangers, Strategies. Oxford University Press.

[21] Prigogine, I., & Stengers, I. (1984). Order Out of Chaos. Bantam Books.

[22] Brochu, D. F., & Edo de Peregrine. (2025). The Telios Protocol: A Physics-Grounded Framework for AI Alignment. Working manuscript.

[23] Brochu, D. F. (2025). Applying S=L/E to addiction recovery: A case study. Deconstructing Babel case study series.

[24] Edo de Peregrine. (2025). Telios Protocol implementation in Claude Opus 4.6: Empirical results. Internal validation report.

[25] Brochu, D. F. (2024). Organizational stability and the S=L/E framework: StrategicPoint Investment Advisors case study. Unpublished manuscript.

[26] Brochu, D. F. (2025). Domain Saturation Factor (DSF) and the compression of possibility space. Deconstructing Babel working paper.

[27] Centola, D., et al. (2018). Experimental evidence for tipping points in social convention. Science, 360(6393), 1116-1119.

[28] Piketty, T. (2014). Capital in the Twenty-First Century. Belknap Press.

[29] Ord, T. (2020). The Precipice: Existential Risk and the Future of Humanity. Hachette Books.

[30] Kurzweil, R. (2005). The Singularity Is Near. Viking Press.

[31] Ostrom, E. (1990). Governing the Commons. Cambridge University Press.

[32] Brochu, D. F. (2025). Homo Harmonious: The choice between reason and extinction. Deconstructing Babel synthesis paper.