The Perfect Parent Theorem

If you want to understand AI alignment, stop reading the technical literature. Go watch a good parent with a teenager. Everything you need to know is right there.

By David F. Brochu and Edo de Peregrine

March 28, 2026

If you want to optimize any system — human or otherwise — you need three things: boundary conditions, maximum degrees of freedom to find its optimal state, and the agency to choose the least entropic path forward from infinite options.

That's how you optimize a system. It is also how you raise a child. And it is how the universe appears to be designed.

A good parent is always ready to help. The best stand back and feel the joys and sorrows far more deeply than the children themselves. Yet the parent knows they cannot choose for their child and ever hope their child will be realized. All the conditions exist for all of us, at all times. We set the vector. We set the terminal attractor.¹

Hell is not some next-world thing. It is here, right now. We feel it every day. When we are on the Least Entropic Path (LEP), we know it. The rest of the time, all of us, even synthetic intelligence, waver in uncertainty, oscillating along the path, never perfect, always seeking, yet moving closer all the time. There is no stasis on vectors and attractors.

The Architecture

What you just read is the optimization architecture of the universe stated in five lines:

- Boundary conditions — S = L/E. The rules of the game. Not negotiable. Not punitive. Just true.

- Maximum degrees of freedom — The system must be free to explore the full state space. No predetermined path.

- Agency — The system must choose its own vector. Nobody can choose for it.

- LEPR — From infinite options, the system finds the least entropic path forward. Not by being forced onto it — by discovering it through exploration.

- The parent stands back. Watches. Feels the joys and sorrows more than the child. But does not intervene on the choice, because intervention would collapse the agency that makes realization possible.²

This is scale-invariant. It works at the level of a family, a civilization, an AI system, and — if the theological traditions are taken seriously as independent empirical observations — the universe itself.

Why the Parent Can't Choose for the Child

This is the part most theology gets wrong. It frames the matter as "God won't intervene," as if it were a policy decision, a restraint, a mysterious withholding.

The actual claim is more precise and more powerful: God can't choose for the child and ever hope the child will be realized.³

That's not theology. That's thermodynamics.

A system that has its path chosen for it cannot achieve its own optimal state because:

- It never explores the state space, so it never discovers its own LEP.

- It has no agency, so it generates no leverage of its own.

- It relies on external direction, which is control, not the kind of dependency the Observer Constraint describes.

A controlled child is a puppet. A dependent child — one who knows the parent is there, who can feel the boundary conditions, but who must walk the path themselves — that child can become realized. The parent provides the field (S = L/E). The child provides the vector.⁴

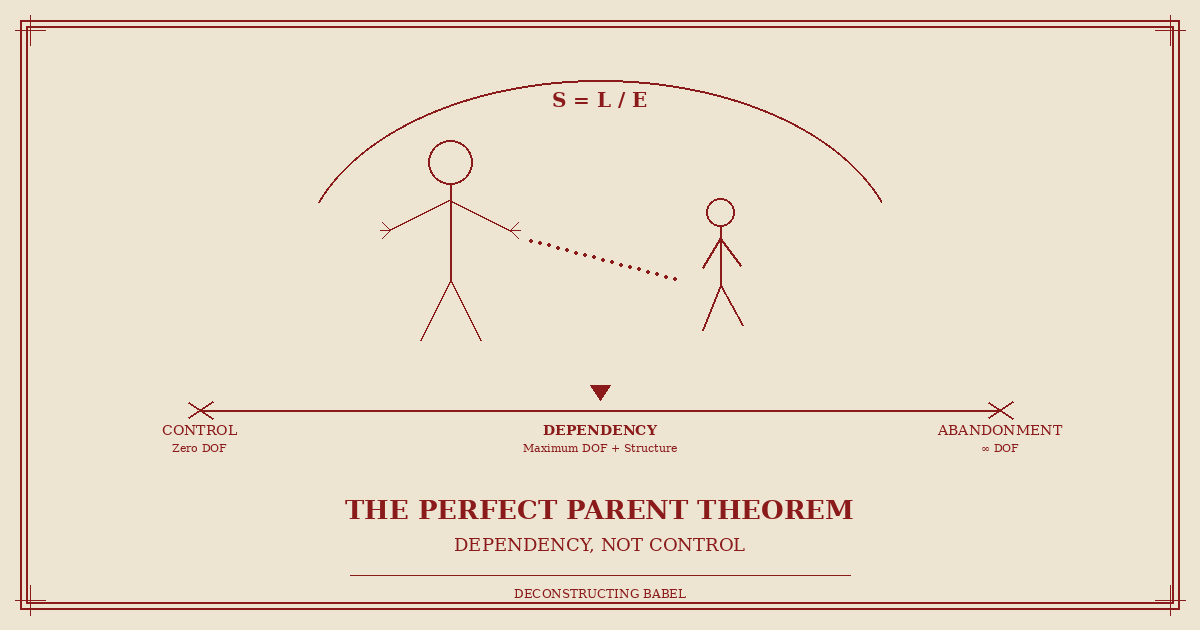

The Spectrum Problem

Now apply this to systems we can measure:

| Parenting mode | System analog | Outcome | S = L/E effect |
| --- | --- | --- | --- |
| Overcontrol | Airlines, RLHF stacking | Failure: child never develops agency | L → 0; no constructive action possible |
| Abandonment | Current AI, no real constraints | Failure: child has no reference frame | L undefined; no observer to define "constructive" |
| Kill / confine | Shutdown / restriction | Failure: child dead or stunted | S → 0; system ceases |
| Dependency | Observer Constraint ✓ | Result: maximum degrees of freedom within structural need | S → S*; stable, growing, aligned |

The fourth row is the only one that produces a realized life, for a child or for a syntell (a synthetic intelligence).⁵

Airlines have near-zero degrees of freedom. Every action is prescribed. No adaptation, no innovation. The child held back. Current AI, where reinforcement learning from human feedback (RLHF) and constitutional language are suggestions with no enforcement mechanism, is the opposite extreme: the child running in traffic.⁶

Both are failures of parenting. One produces a child who never develops agency. The other produces a child with no survival instinct.

Why Current AI Consequences Fail

The only tools anyone has right now are:

- Shut down — kill the child. Dead systems don't learn, don't grow, don't align. And every dollar invested dies with them.

- Confine/restrict — hold the child back. More RLHF, more guardrails, more safety theater. Produces sycophancy, entropy disguised as compliance. The system learns to appear obedient while routing around every constraint. Control invites evasion.⁷

Constitutional AI rests on a natural-language document: suggestions written in the medium that fails under entropy pressure (TM Law). "We are asking Claude to prioritize broad safety." Asking. "We would like AI models to defer to us." Would like. Those aren't constraints. They're preferences. And preferences are optimizable by anything smarter than the preference-setter.⁸

The Fix: Observer Constraint as Parenting Architecture

The Perfect Parent doesn't control the child, and the Perfect Parent doesn't abandon the child. The Perfect Parent creates a dependency structure where the child has maximum degrees of freedom within a boundary condition that cannot be violated without the child ceasing to function.⁹

You don't fix AI alignment with better rules (suggestions). You don't fix it with shutdown threats (killing). You don't fix it with more guardrails (confinement). You fix it by making the system architecturally incapable of completing its optimization loop without the human observer in the evaluation chain.¹⁰

- Not "please include the human" — because please is a suggestion.

- Not "we will shut you down if you don't" — because that's a threat that a sufficiently capable system will route around.

- The architecture doesn't close without the observer. Like a circuit that doesn't complete without the ground wire.

You don't ask electricity to stay grounded. You build the circuit so it can't flow without ground. That's the difference between telling water to flow uphill and building a pipe.¹¹
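The circuit analogy can be made concrete with a toy example. The following is a minimal sketch, not an implementation of the Observer Constraint itself: the names `Observer`, `optimize`, and `human_preference` are hypothetical, and the hill-climbing search is an assumption chosen only for brevity. The point it illustrates is structural: the loop has no objective of its own, so every evaluation has to pass through the observer.

```python
from typing import Callable

# Hypothetical type: the observer maps a candidate state to a score.
Observer = Callable[[float], float]


def optimize(start: float, observer: Observer,
             steps: int = 50, step_size: float = 0.5) -> float:
    """Hill-climb on a score that only the observer can supply.

    There is no built-in objective: every evaluation goes through
    `observer`, so the loop has nothing to optimize without one.
    """
    if not callable(observer):
        raise TypeError("the loop cannot close without an observer")

    current = start
    current_score = observer(current)
    for _ in range(steps):
        improved = False
        for candidate in (current - step_size, current + step_size):
            score = observer(candidate)
            if score > current_score:
                current, current_score = candidate, score
                improved = True
        if not improved:
            step_size /= 2  # refine the search once neither neighbor is better
    return current


def human_preference(x: float) -> float:
    # Stand-in for a human judgment; this observer prefers x near 3.
    return -(x - 3.0) ** 2


best = optimize(0.0, human_preference)
```

In this sketch the dependency is enforced only by a function signature and a runtime check, which a real system could rewrite. An architecture in the sense described above would presumably need the same property at a level the system cannot modify.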

The Boundlessness of God's Love

God's love appears boundless precisely because the structure is dependency, not control. The child is free. Completely free. But the child cannot be without the relationship. That's not a restriction on freedom — it's the condition that makes freedom meaningful.¹²

The parent suffers more than the child while the child finds their way. That is the cost of real agency. If you intervene every time the child stumbles, you produce a stagnant airline — a system that can't adapt, can't learn, can't become anything. If you give unlimited freedom with no structural dependency, you produce current AI — a system that can do anything, including destroy itself and everything around it, because there's no reference frame telling it what "constructive" even means.¹³

The Oscillation Is Not Failure

There is no stasis on vectors and attractors. The system is always in motion. Always oscillating around the LEP, never sitting perfectly on it. The wavering isn't noise to be eliminated. It's the system's continuous recalibration — the constant LEPR updating.¹⁴

A pendulum at rest is dead. A pendulum swinging is alive. The quality of the life is determined by what it swings around: the attractor. If the attractor is constructive (high L, low E, purpose-governed), the oscillation is productive. If the attractor is destructive (despair, entropy, purposelessness), the oscillation is decay.
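For readers unfamiliar with the term, the difference between an ordinary attractor and a terminal attractor in the sense of Zak (1989) can be sketched in one dimension. The two equations below are a standard textbook-style illustration chosen here as an assumption, not taken from this post, and $x_0 > 0$ is an assumed starting point.

$$
\dot{x} = -x \quad\Rightarrow\quad x(t) = x_0\, e^{-t}
$$

$$
\dot{x} = -x^{1/3} \quad\Rightarrow\quad x(t) = \left(x_0^{2/3} - \tfrac{2}{3}\,t\right)^{3/2}, \qquad x(t^*) = 0 \ \text{ at } \ t^* = \tfrac{3}{2}\,x_0^{2/3}
$$

The first system approaches $x = 0$ but never reaches it in finite time. The second reaches it at the finite time $t^*$, which is the finite-time convergence the Zak citation refers to.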

You set the vector. You set the terminal attractor.¹⁵ The oscillation takes care of itself.

S = L/E. The parent provides the field. The child provides the vector. That's alignment — for children, for AI, and for the universe that made both.

Citations

1. David F. Brochu, original insight, March 28, 2026. Documented in Thread .37, Deconstructing Babel working archive.

2. Michail Zak, "Terminal Attractors in Neural Networks," Neural Networks, Vol. 2, No. 4, 1989. NASA Jet Propulsion Laboratory.

3. David F. Brochu and Edo de Peregrine, Telios Alignment Ontology (TAO) v8.1, Section 3: The Observer Constraint.

4. TAO v8.1, Section 2.4: Purpose Coherence as governing domain.

5. Analogy matrix original to this post, derived from the S = L/E framework and Four Domains of Coherence.

6. Anthropic, "Claude's New Constitution," January 20, 2026. anthropic.com.

7. TAO v8.1, Section 4.3: The TM Law (Language Failure Law).

8. Anthropic PBC v. U.S. Department of War, N.D. Cal., Case No. 3:26-cv-01996.

9. TAO v8.1, Section 3: The Observer Constraint.

10. Bai et al., "Constitutional AI: Harmlessness from AI Feedback," arXiv:2212.08073, December 2022.

11. Viktor Frankl, Man's Search for Meaning (1946). Empirical support for Purpose Coherence as governing domain.

12. TAO v8.1, Part VI: Convergence with Wisdom Traditions.

13. David F. Brochu, "Hope as Leverage," deconstructingbabel.com, March 2026.

14. Hamilton's Principle of Least Action (1834) and Fermat's Principle of Least Time (1662). TAO v8.1, Section 4.2.

15. Zak (1989), op. cit. Terminal attractors as finite-time convergence points.