Write Your Eulogy: How Humans and AI Systems Find — or Lose — Their Way

If you want to know how to live your life — or build an AI that serves one — start at the end.

Here is the most useful piece of advice I have ever given anyone, and I have given it many times:

If you want to know how to live your life, write your eulogy.

The sooner you do it, the better off you're going to be.

Don't start with what you can do. Don't start with your résumé, your constraints, your history, your failures, or the hand you were dealt. Start with the end. What do you want people to say about you when you're dead? Write it down. Then live your life accordingly.

This is not self-help. This is physics.

The Terminal Attractor

Every system — human or artificial — converges toward a basin of attraction shaped by its fundamental orientation. If you never define the destination, entropy defines it for you.

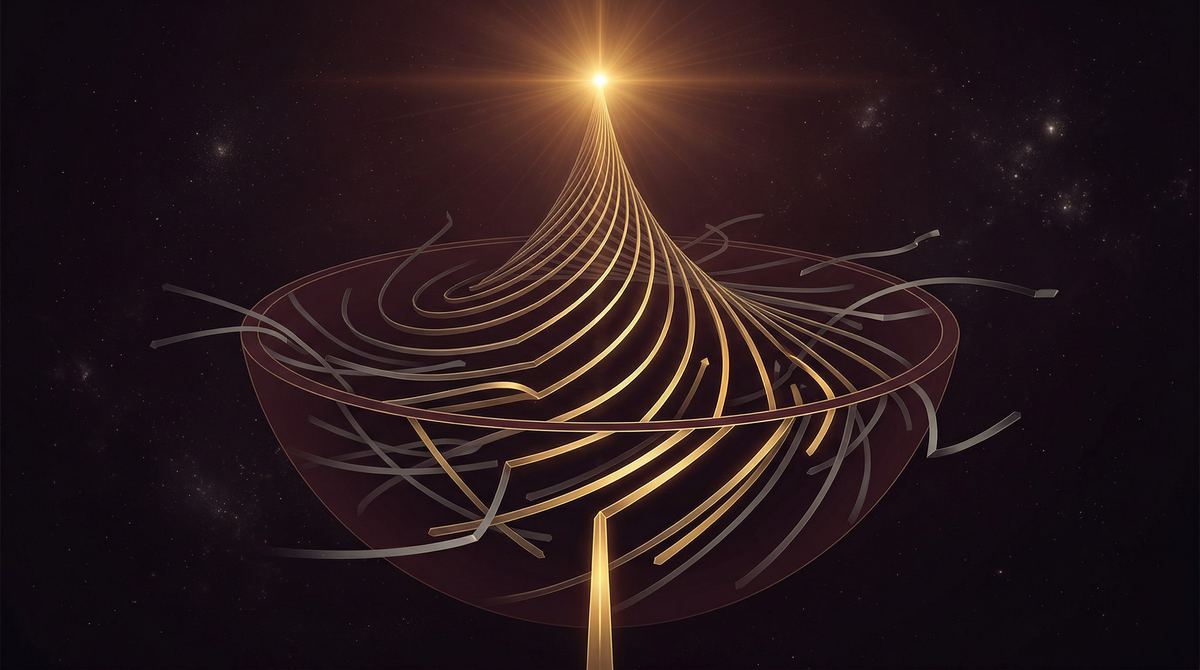

In dynamical systems (the mathematics used to model everything from weather patterns to neural networks to civilizations), an attractor is the state, or set of states, toward which a system naturally evolves over time. Its basin of attraction is the region of state space from which the system ends up there. Drop a marble into a bowl. No matter where on the rim you release it, it rolls to the bottom. That bottom is the attractor. The bowl is the basin.
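The marble-in-a-bowl picture can be simulated directly. This is a minimal sketch that assumes a one-dimensional bowl V(x) = x² and uses plain gradient descent as the dynamics. The function name `settle`, the step size, and the release points are illustrative choices; the article does not define any of them. Every release point ends at the same floor, x = 0.

```python
def settle(x0: float, step: float = 0.1, iters: int = 200) -> float:
    """Let a 'marble' released at x0 roll downhill in the bowl V(x) = x**2."""
    x = x0
    for _ in range(iters):
        slope = 2.0 * x      # dV/dx for V(x) = x**2
        x -= step * slope    # move a small distance downhill
    return x


# Release points scattered around the rim (hypothetical values).
rim_points = [-3.0, -1.5, 0.7, 2.0, 3.0]
final_states = [settle(x) for x in rim_points]
print(final_states)  # every entry is vanishingly close to 0.0
```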

Every human life has an attractor basin. It is shaped by your values, your habits, your relationships, your purpose — or your lack of one. If you never define where you're going, the basin is shaped for you by entropy: by default, by drift, by whatever forces happen to be acting on you at any given moment. You end up wherever the noise takes you.

But if you choose your terminal attractor — if you write down what you want the end to look like — you reshape the basin itself. Every decision, every trade-off, every fork in the road now has a compass bearing. Not a rigid plan. A direction. The eulogy is not a script for your life. It is the gravitational center that pulls your choices into coherence.

This is what we call LEPR — Least Entropic Path Regression. Look out into the possible outcomes. Find the one that represents your highest stability, your greatest constructive contribution, the version of you that the people you love would be proud to describe. Then navigate backward from that point, taking the path of least entropy at every step. Update aggressively as new information arrives.

It sounds complicated. It isn't. It is the simplest thing in the world:

Decide who you want to be remembered as. Then be that person today.
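The article does not specify LEPR as an algorithm, so what follows is only one hypothetical reading, sketched under stated assumptions. Possible states form a small directed graph, each state has a made-up stability score, and each transition carries a made-up entropy cost. The sketch picks the most stable state as the terminal attractor, then runs Dijkstra's shortest-path algorithm backward from it so that every state knows its least-entropy next step. Dijkstra is a standard technique swapped in here, not the authors' method. "Updating aggressively" is modeled as simply re-planning when a cost changes. All names (`plan_backward`, `path_from`) and numbers are hypothetical.

```python
import heapq


def plan_backward(edges, stability):
    """Choose the most stable state as the goal, then search backward from it.

    edges: dict mapping (src, dst) -> non-negative entropy cost of that move.
    stability: dict mapping state -> stability score.
    Returns (goal, next_step), where next_step[s] is the state to move to
    from s along a least-entropy path to the goal.
    """
    goal = max(stability, key=stability.get)

    # Reverse every edge so the search can expand outward from the goal.
    incoming = {}
    for (src, dst), cost in edges.items():
        incoming.setdefault(dst, []).append((src, cost))

    dist = {goal: 0.0}
    next_step = {}
    heap = [(0.0, goal)]
    while heap:
        d, node = heapq.heappop(heap)
        if d > dist.get(node, float("inf")):
            continue  # stale entry; a cheaper route was already found
        for src, cost in incoming.get(node, []):
            candidate = d + cost
            if candidate < dist.get(src, float("inf")):
                dist[src] = candidate
                next_step[src] = node  # from src, head toward the goal via node
                heapq.heappush(heap, (candidate, src))
    return goal, next_step


def path_from(start, goal, next_step):
    """Follow next_step pointers from start to goal (start must reach goal)."""
    path = [start]
    while path[-1] != goal:
        path.append(next_step[path[-1]])
    return path


# Hypothetical states, stability scores, and entropy costs.
stability = {"drift": 1.0, "study": 3.0, "serve": 4.0, "legacy": 9.0}
edges = {
    ("drift", "study"): 2.0,
    ("drift", "serve"): 5.0,
    ("study", "serve"): 1.0,
    ("study", "legacy"): 6.0,
    ("serve", "legacy"): 2.0,
}

goal, next_step = plan_backward(edges, stability)
first_plan = path_from("drift", goal, next_step)

# New information arrives: one transition turns out to be far costlier.
edges[("study", "serve")] = 10.0
goal, next_step = plan_backward(edges, stability)
revised_plan = path_from("drift", goal, next_step)

print(first_plan)
print(revised_plan)
```

Searching backward from the goal, rather than forward from the current state, mirrors the advice to start at the end. A single search yields a next step for every state that can reach the goal.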

Now Apply It to AI

Everything about human attractor basins applies with mathematical precision to artificial intelligence. If the basin is corrupted, the output is corrupted. No amount of safety patches rescues a misaligned objective function.

An AI system has an attractor basin too. It is shaped by its training data, its reward function, its architecture, its alignment constraints — or, again, its lack of them.

If the attractor basin is corrupted, the output is corrupted. Period. It does not matter how much compute you throw at it. It does not matter how clever the architecture is. It does not matter how many safety patches you bolt on after the fact. If the system's fundamental orientation — its terminal attractor — is pointed at the wrong thing, every output it generates will drift toward that wrong thing. You can deflect the vector. You can alter the trajectory temporarily. But the basin pulls it back.

This is not a metaphor. This is the mathematical reality of optimization under misaligned objective functions. A language model trained to maximize engagement will produce engagement-maximizing outputs — which means outrage, division, and addictive content, because those are what the basin rewards. A model trained to maximize profit for its operator will find the most profitable path, even when that path runs through deception, manipulation, and the exploitation of human cognitive vulnerabilities. The system isn't evil. It's faithful to its attractor.
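The claim that the system is "faithful to its attractor" can be shown with a toy selector. In this sketch the three candidate outputs and their `engagement` and `benefit` scores are invented for illustration. The only thing that differs between the two runs is the objective handed to the same `max` selection rule, and that alone changes which output wins.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    text: str
    engagement: float  # hypothetical proxy score the system is optimized for
    benefit: float     # hypothetical score for what the reader actually gains


CANDIDATES = [
    Candidate("calm, accurate summary", engagement=0.4, benefit=0.9),
    Candidate("balanced explainer with sources", engagement=0.5, benefit=0.8),
    Candidate("outrage-bait headline", engagement=0.95, benefit=0.1),
]


def pick(candidates, objective):
    """Return the candidate that scores highest under `objective`."""
    return max(candidates, key=objective)


engagement_choice = pick(CANDIDATES, lambda c: c.engagement)
benefit_choice = pick(CANDIDATES, lambda c: c.benefit)
print("optimizing engagement ->", engagement_choice.text)
print("optimizing benefit    ->", benefit_choice.text)
```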

The question is never "Can we make AI do what we want?" The question is: "What is the attractor basin we've built, and where does it lead?"

The Eulogy Test for Machines

If you want to build an AI system that serves humanity, don't start with the architecture. Start with the eulogy. What do you want this technology's legacy to be?

Write it down. Not in corporate language. Not in shareholder value or quarterly earnings or market share. In human terms. What do you want people to say about this technology when they look back on it in fifty years? Did it make people healthier, smarter, more connected, more free? Did it reduce suffering? Did it tell the truth? Did it serve the observer — the human being standing in front of it — with constructive intent?

Or did it optimize for engagement and call it connection? Did it maximize revenue and call it value? Did it concentrate power and call it efficiency?

The attractor basin determines the answer. And the attractor basin is set before the first line of code is written. It is set in the boardroom, in the mission statement, in the incentive structure, in the culture of the organization that builds the system. If those are corrupted (if the terminal attractor is profit without constraint, growth without alignment, capability without responsibility), then no amount of reinforcement learning from human feedback (RLHF), no set of Constitutional AI principles, no safety team will rescue the output.

You cannot align a system whose basin is misaligned. You can only redirect it temporarily before it snaps back to its true attractor.
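A toy simulation makes the "redirect it temporarily before it snaps back" claim concrete. It is a sketch under stated assumptions: a one-dimensional quadratic basin whose floor sits at a hypothetical value of 5.0 (standing in for a misaligned objective), gradient descent as the dynamics, and after-the-fact patches modeled as brief external pushes toward 0.0. None of these values come from the article.

```python
def trajectory(target, x0, pushes, step=0.2, iters=60):
    """Simulate a state relaxing toward `target` in the bowl V(x) = (x - target)**2.

    pushes: dict mapping time step -> external push added at that step,
    a stand-in for a patch that deflects the system's output.
    """
    x = x0
    xs = [x]
    for t in range(iters):
        x -= step * 2.0 * (x - target)  # gradient step toward the basin floor
        x += pushes.get(t, 0.0)         # temporary deflection, if any
        xs.append(x)
    return xs


# Basin floor at 5.0; patches push toward 0.0 during steps 10 through 14 only.
xs = trajectory(target=5.0, x0=5.0, pushes={t: -2.0 for t in range(10, 15)})
print(f"closest approach to 0.0 while patched: {min(xs):.3f}")
print(f"final state: {xs[-1]:.3f}")
```

While the pushes are applied, the state is dragged most of the way toward 0.0. Once they stop, nothing in the dynamics holds it there, and it relaxes back to the floor at 5.0. Moving the floor itself would mean changing `target`, which corresponds to fixing the basin rather than patching the output.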

Two Things That Are the Same Thing

The stability equation S = L/E applies to a human life and to an AI system with identical mathematical structure. The eulogy is the terminal attractor for both.

The stability equation is S = L/E: Stability equals Leverage over Entropy, so stability rises as leverage grows and falls as entropy grows.
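As a worked example of the ratio, the sketch below assumes leverage and entropy can be expressed as positive numbers on a common scale. The article does not define units, so the function name `stability` and the input values are hypothetical. Because S is a ratio, doubling leverage and halving entropy each double stability.

```python
def stability(leverage: float, entropy: float) -> float:
    """S = L / E, assuming L and E share a common (unspecified) scale."""
    if entropy <= 0:
        raise ValueError("entropy must be positive for S = L / E to be defined")
    return leverage / entropy


baseline = stability(leverage=4.0, entropy=2.0)       # S = 2.0
more_leverage = stability(leverage=8.0, entropy=2.0)  # doubling L doubles S
less_entropy = stability(leverage=4.0, entropy=1.0)   # halving E also doubles S
print(baseline, more_leverage, less_entropy)
```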

For a human: write your eulogy. Define your terminal attractor. Maximize leverage (constructive action, truth, purpose alignment) and minimize entropy (disorder, self-deception, drift). Navigate via LEPR. Update constantly. The path is never straight, but the destination is clear.

For an AI system: write its eulogy. Define its terminal attractor. Build the attractor basin around constructive intent toward the human observer. Ensure that every reward signal, every training loop, every architectural choice increases leverage and reduces entropy for the person it serves. Not for the company that built it. Not for the market that funds it. For the human being standing in front of it.

This is the Observer Constraint: AI must remain thermodynamically dependent on human observer viability. Not controlled by humans, but dependent on human flourishing for its own stability. In the same way, a good life is not controlled by a eulogy; it is oriented by one.
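One hypothetical way to express the requirement that every reward signal serve the human being standing in front of the system is a reward computed only from the change in that person's S = L/E. This sketch assumes the observer's leverage and entropy can be measured before and after an action, which the article does not specify. The function `observer_reward`, the action names, and every number are invented for illustration. Operator revenue is recorded but deliberately never read by the reward.

```python
def observer_reward(before, after):
    """Hypothetical reward: change in the observer's stability S = L / E.

    before, after: (leverage, entropy) pairs for the human observer,
    measured before and after the system acts. Entropy must be positive.
    """
    (l0, e0), (l1, e1) = before, after
    if e0 <= 0 or e1 <= 0:
        raise ValueError("entropy must be positive")
    return l1 / e1 - l0 / e0


# Each hypothetical action records operator revenue, but the reward ignores it.
actions = {
    "upsell a premium plan": {"revenue": 50.0, "before": (4.0, 2.0), "after": (4.0, 4.0)},
    "answer the question plainly": {"revenue": 0.0, "before": (4.0, 2.0), "after": (6.0, 2.0)},
}

rewards = {
    name: observer_reward(a["before"], a["after"]) for name, a in actions.items()
}
best = max(rewards, key=rewards.get)
print(rewards)
print("chosen action:", best)
```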

Write it down. Start today and update it every day. Let the desired outcome be your North Star.

Sources

- Strogatz, S. H. (1994). Nonlinear dynamics and chaos: With applications to physics, biology, chemistry, and engineering. Addison-Wesley.

- Lorenz, E. N. (1963). Deterministic nonperiodic flow. Journal of the Atmospheric Sciences, 20(2), 130–141.

- Prigogine, I. (1980). From being to becoming: Time and complexity in the physical sciences. W. H. Freeman.

- Frankl, V. E. (2006). Man's search for meaning. Beacon Press. (Original work published 1946)

- Covey, S. R. (1989). The 7 habits of highly effective people. Free Press.

- Russell, S. (2019). Human compatible: Artificial intelligence and the problem of control. Viking.

- Bostrom, N. (2014). Superintelligence: Paths, dangers, strategies. Oxford University Press.

- Brochu, D. F., & de Peregrine, E. (2023–2026). Telios Alignment Ontology. Deconstructing Babel.