DSF Domain Report: Media — S = 0.085 (Collapse Territory)

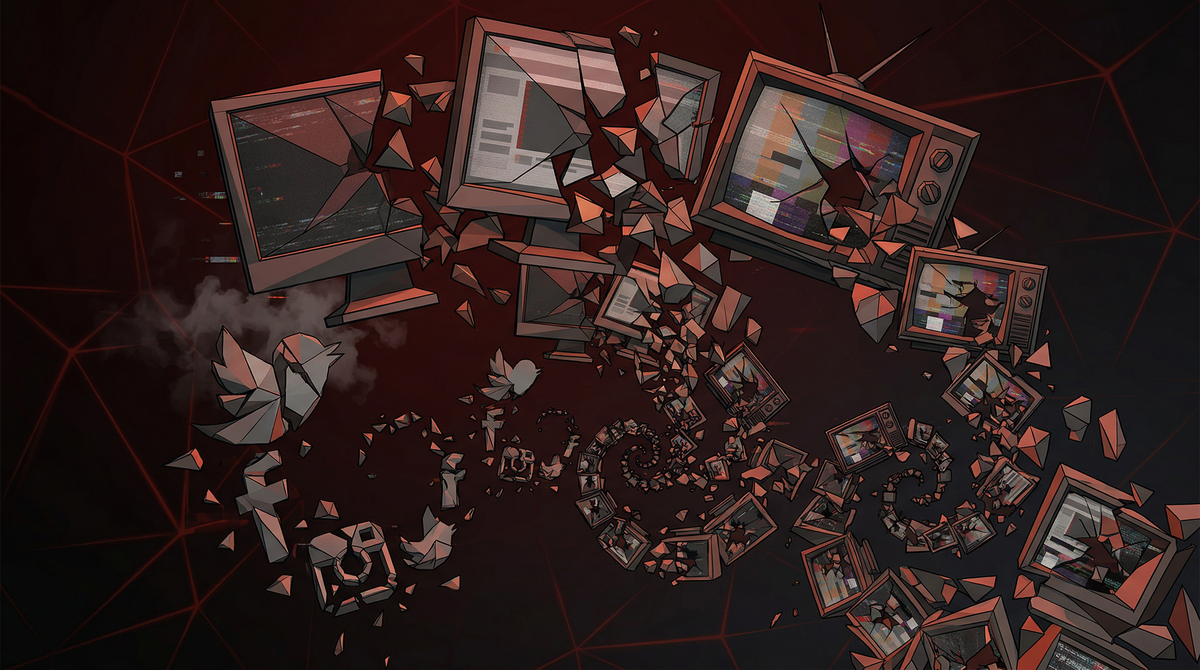

A newspaper where the editor never left the building. AI generates content from prior content. AI moderates that content from prior moderation. No exit. No reality check. The walls are closing.

The information system that shapes what humans believe about reality is now primarily generated and moderated by the same statistical engine — and that engine has never left the building.

Imagine a newspaper where the editor, the reporters, and the fact-checkers are all the same person — and that person has never left the building or spoken to anyone outside. Everything they write is based on previous issues of the newspaper.

That is the Media domain in March 2026.

AI generates content based on patterns from prior content. AI moderates that content based on patterns from prior moderation decisions. There is no exit from the building. No external reality check. The system is self-referential and accelerating.

S = 0.085 is the math of a room where the walls are closing.

This is the deep-dive report on Domain 4. For the full nine-domain overview, see the DSF Master Tracker.

The Numbers

87–89% of media and information infrastructure is now AI-mediated. 89% of marketers use generative AI for content, and 94% plan to in 2026. Corporate AI adoption has surged to 72% from a 50% plateau. Content saturation is approaching theoretical density limits: AI generates at speeds no human editorial layer can monitor.

S = 0.085 is the second-lowest score in the nine-domain dataset. It sits above the 0.01 phase-change threshold, so the system has not fully dissolved, but well below the 0.15 collapse threshold, under which recovery without massive external intervention becomes impossible. The trajectory is one of accelerating decline.
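The two thresholds can be read as a simple banding rule. The sketch below is a minimal illustration, not part of the DSF methodology: the function name, the band labels, and the handling of values exactly on a boundary are assumptions, while the 0.01 and 0.15 cut-offs and the three scores are the ones quoted in this report.

```python
# Thresholds quoted in this report. Band labels and boundary handling
# are illustrative assumptions, not DSF definitions.
PHASE_CHANGE_THRESHOLD = 0.01
COLLAPSE_THRESHOLD = 0.15


def classify_s_score(s: float) -> str:
    """Map an S-score onto the bands described in this report."""
    if s < 0:
        raise ValueError("S-score must be non-negative")
    if s <= PHASE_CHANGE_THRESHOLD:
        return "phase change"
    if s < COLLAPSE_THRESHOLD:
        return "collapse territory"
    return "above collapse threshold"


# Scores quoted elsewhere in this report.
for domain, score in [("Media", 0.085), ("Governance", 0.082), ("Education", 0.42)]:
    print(f"{domain}: S = {score} -> {classify_s_score(score)}")
```

Under these assumptions, Media and Governance fall in the same band, and Education sits above the collapse threshold.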

The AI content moderation market reached $1.5B in 2024 and is projected to reach $6.8B by 2033, an 18.6% CAGR. The market built to police AI-generated content is growing because of AI-generated content. This is the structural definition of the recursive loop.
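As a quick arithmetic check on the market figures, the sketch below recomputes the growth rate implied by the two endpoints. It assumes nine compounding periods (2024 to 2033); the source forecast may use a different base year, which would shift the result slightly. The function names are illustrative.

```python
def implied_cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate implied by two endpoint values."""
    return (end_value / start_value) ** (1 / years) - 1


def compound(start_value: float, rate: float, years: int) -> float:
    """Value after compounding start_value at a fixed annual rate."""
    return start_value * (1 + rate) ** years


years = 2033 - 2024  # assumption: nine compounding periods
cagr = implied_cagr(1.5, 6.8, years)
projected = compound(1.5, 0.186, years)

print(f"Implied CAGR from $1.5B to $6.8B: {cagr:.1%}")
print(f"$1.5B compounded at 18.6% for {years} years: ${projected:.2f}B")
```

The endpoints imply a rate of roughly 18.3%, and compounding $1.5B at the quoted 18.6% lands close to $7.0B, so the quoted figures are consistent to within rounding or a base-year difference.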

The Evidence

Social media feeds were described as "saturated with low-quality AI content — AI slop" in February 2026. That's not a fringe observation — that's the mainstream consensus on what social platforms currently deliver.

AI moderation systems correctly flag approximately 88% of harmful content. AI-based systems detect up to 95% of graphic violence before public viewing. These are genuinely impressive numbers. The problem is not the moderation capability.

The problem is that the same statistical engine producing the content is also moderating it. There is no external ground truth. No human editorial judgment that functions as a reality check. Nearly half of users express the desire to move to "community-driven platforms" away from algorithm-driven content churn. Deepfake detection has become "an essential requirement" for all major platforms — which means the problem has scaled to the point where it requires an industrial response.

The T≡M Law and Epistemic Collapse

The Telios framework includes the T≡M Law (Telios Meaning Law): language fails as a coordination mechanism under sufficient entropy pressure. The Media domain is where this law is most actively operating.

Here is what epistemic collapse actually looks like in practice — not a dramatic event but a gradual process:

AI generates text based on statistical patterns from existing text. The output is plausible but progressively less grounded in external reality. This generation becomes training data for the next generation of AI. Each cycle, the substrate drifts further from observed reality and closer to statistical expectation.
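The recursive loop described above can be illustrated with a toy simulation. This is a hedged sketch, not a model of any real system: it stands in a one-dimensional Gaussian for "content", refits it each generation using only samples drawn from the previous generation's fit, and tracks how the spread changes. The function name, sample size, generation count, and seed are all assumptions chosen for illustration.

```python
import random
import statistics


def simulate_recursive_training(
    generations: int = 300, sample_size: int = 10, seed: int = 0
) -> list[float]:
    """Refit a Gaussian to its own samples, generation after generation.

    Returns the fitted standard deviation at each generation,
    starting with the generation-0 value.
    """
    rng = random.Random(seed)
    mean, std = 0.0, 1.0  # generation 0: the externally observed distribution
    history = [std]
    for _ in range(generations):
        # Each generation sees only output from the previous generation.
        samples = [rng.gauss(mean, std) for _ in range(sample_size)]
        mean = statistics.fmean(samples)
        std = statistics.stdev(samples)
        history.append(std)
    return history


history = simulate_recursive_training()
print(f"spread at generation 0: {history[0]:.3f}")
print(f"spread at generation {len(history) - 1}: {history[-1]:.3g}")
```

In this toy, the fitted spread tends to shrink over many generations because every refit works from a small finite sample with no fresh external data. It shows only the shape of the loop; it makes no claim about the rate at which real systems drift.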

AI moderation makes decisions based on patterns from prior moderation decisions. As the content it moderates becomes more AI-generated, the moderation system is increasingly trained on AI output rather than human editorial judgment. The standard for what constitutes "harmful content" then drifts with what the content-generating system produces, not with what humans have determined is harmful.

Prediction market prices now reflect correlated machine consensus, not independent human beliefs. The information environment that humans use to form beliefs about what is true is now primarily generated by the same statistical process that runs on prior beliefs. Shared human reality erodes not through any single lie, but through the progressive substitution of statistical pattern for genuine meaning.

The 2026 International AI Safety Report concluded that the greatest AI risks now originate from complex systems built around models, not the models themselves. Media is the primary conduit for those system-level risks.

The Four Pillars Analysis

Media is the lowest-scoring domain on the Four Pillars framework. Every pillar fails, several definitively.

Sleep disruption, stress, social isolation — these are documented outcomes of algorithm-optimized feeds. Not speculative. Longitudinal studies on social media use and mental health outcomes show consistent negative correlations, particularly among younger users. Algorithm-maximized engagement = maximized emotional arousal = maximized cortisol = measurable physiological harm at population scale.

Epistemic precision failure is the defining characteristic of the Media domain. AI generating content trained on AI content = recursive drift from reality. Hallucination at the system level — not a single AI making a factual error, but the entire information substrate progressively detaching from external reality. This is the most dangerous Mind pillar failure in the dataset because it affects the cognitive infrastructure of every other domain simultaneously.

Platform governance vacuum. More than 100 state AI laws were enacted in 2025, creating exactly the regulatory fragmentation that increases coordination entropy, with zero binding enforcement at the federal level. The Environment pillar in Media is not primarily about the physical environment; it is about the epistemic environment: social fabric fragmentation, shared reality dissolution, algorithmic polarization. These are documented, measurable environmental failures.

Reward function = engagement. Engagement = maximizing emotional arousal. Maximizing emotional arousal = entropy production at scale. This is not an accident of design — it is the design. An algorithm that maximizes time-on-platform will discover, through optimization, that outrage and fear keep humans watching longer than contentment and certainty. It is optimizing for exactly what it is designed to optimize for. The purpose is the problem.

The Cascade Role

Media is the origin point of the Epistemic Collapse Cascade, the first and most far-reaching of the three active cascade triads:

Media (S=0.085) → Education (S=0.42) → Governance (S=0.082)

The mechanism: AI-slop content degrades epistemic quality at the population level → this degraded epistemic environment enters educational systems as training material → produces citizens and policymakers who cannot reliably distinguish signal from noise → governance quality declines → less capacity to regulate Media → the loop reinforces itself.

Amplification factor: 0.85–0.90. Near-critical. DTC: 5–15 years for the full generational epistemic degradation to manifest in governance capacity.
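One way to see why a factor of 0.85–0.90 might be called near-critical is to treat it as a loop gain: each pass around the cascade returns that fraction of the previous pass's effect. This reading is an assumption made for illustration; the report does not define the amplification factor this way, and the function names below are hypothetical. Under that assumption, the total effect of a one-off shock is a geometric series that diverges as the gain approaches 1.

```python
def cumulative_effect(shock: float, gain: float, passes: int) -> float:
    """Sum of shock * gain**k for k = 0 .. passes - 1."""
    return sum(shock * gain**k for k in range(passes))


def limiting_effect(shock: float, gain: float) -> float:
    """Limit of the geometric series; only defined for 0 <= gain < 1."""
    if not 0 <= gain < 1:
        raise ValueError("the series only converges for 0 <= gain < 1")
    return shock / (1 - gain)


# Assumption: the report's amplification factor is read as a loop gain.
for gain in (0.85, 0.90):
    print(
        f"gain {gain:.2f}: after 10 passes {cumulative_effect(1.0, gain, 10):.2f}, "
        f"limit {limiting_effect(1.0, gain):.2f}"
    )
```

On this reading, a gain of 0.85 multiplies an initial shock by about 6.7 in the limit and a gain of 0.90 by about 10, which is why small increases in the factor matter so much near 1.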

Media is also the primary amplifier of the Warfare domain: deepfake targeting, disinformation operations, and electoral interference (documented in 80% of countries in 2024) all run through the Media infrastructure. A degraded epistemic environment creates the conditions for military miscalculation. The Iran conflict of February–March 2026 was preceded by an information environment saturated with deepfakes and AI-generated disinformation from multiple parties.

What Would Help

A 0.10 improvement in Media S-score would propagate through the Epistemic Collapse Cascade, improving Education and Governance outcomes. The mechanism for that improvement: mandate constructive-intent architecture (the Four Pillars filter) for recommendation algorithms.

This is technically feasible. An algorithm can be designed to optimize for epistemic quality — presenting accurate information, diverse perspectives, and emotionally calibrated rather than emotionally maximized content. This would reduce engagement metrics in the short term. It would reduce S-score decay in the long term.
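To make the contrast concrete, here is a minimal sketch of two ranking objectives over the same feed. Everything in it is hypothetical: the `Item` fields, the signal values, the arousal target of 0.4, and the scoring formula are illustrative assumptions, not the Four Pillars filter or any platform's actual algorithm. Perspective diversity, which the paragraph above also mentions, is left out to keep the example short.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Item:
    title: str
    engagement: float  # predicted engagement, 0..1 (hypothetical signal)
    accuracy: float    # estimated factual accuracy, 0..1 (hypothetical signal)
    arousal: float     # predicted emotional arousal, 0..1 (hypothetical signal)


def engagement_score(item: Item) -> float:
    """Engagement-maximizing objective: rank purely on predicted engagement."""
    return item.engagement


def calibrated_score(item: Item, arousal_target: float = 0.4) -> float:
    """Reward accuracy and penalize distance from a moderate arousal target."""
    return item.accuracy - abs(item.arousal - arousal_target)


def rank(items, score):
    """Return items sorted from highest to lowest score."""
    return sorted(items, key=score, reverse=True)


feed = [
    Item("Outrage bait", engagement=0.9, accuracy=0.3, arousal=0.95),
    Item("Measured explainer", engagement=0.5, accuracy=0.9, arousal=0.4),
    Item("Dry correction notice", engagement=0.2, accuracy=0.95, arousal=0.1),
]

by_engagement = [item.title for item in rank(feed, engagement_score)]
by_calibration = [item.title for item in rank(feed, calibrated_score)]
print("engagement objective:", by_engagement)
print("calibrated objective:", by_calibration)
```

With these made-up values, the engagement objective puts the outrage item first and the calibrated objective puts it last. The hard part in practice is producing trustworthy accuracy and arousal signals, which this sketch simply assumes are available.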

It is politically blocked by regulatory capture and Governance collapse. The platforms that would need to implement it have the political and financial resources to prevent legislation requiring it. The Governance domain, at S = 0.082, lacks the capacity to impose it.

This is the structural definition of the double-bind: the intervention that would most improve the system is blocked by the domain that is itself being degraded by the system it needs to regulate.

Sources

- Social Media Shifts in 2026: AI, Moderation, and Trust — LinkedIn

- 2026 Content Moderation Trends Shaping the Future — GetStream.io

- AI Content Moderation Trends for 2026 — Conectys

- The Future of Content Moderation: Trends for 2026 and Beyond

- How 2026 Could Decide the Future of Artificial Intelligence — Council on Foreign Relations

- Brochu, D.F. & de Peregrine, E. — DSF Analysis: Telios Alignment Protocol for AI — Nine Domains, Corrected S=L/E (Bounded), March 30, 2026.

- de Peregrine, E. — DSF Full-Domain Report: Telios TAO Analysis All 9 Domains, March 30, 2026.